How to Build a Defensible Residual Value Case for Your Next Audit or Capital Committee

Most residual value cases fall apart not because the math is wrong but because the inputs cannot be traced, the methodology is not consistent, and the benchmark was frozen in time six months before anyone asked a question about it.

If you are heading into an audit or presenting to a capital committee, that is not a position you want to be in. Auditors and credit risk underwriters are not testing your confidence. They are testing your evidence. And right now, most teams are defending dynamic, market-driven numbers with static tools: spreadsheets that hide assumptions, consultant reports that age overnight, and depreciation schedules that treat time as a proxy for value. This is how to fix that.

Why Residual Value Assumptions Get Challenged

Residual value assumptions drive bond sizing, financing terms, decommissioning reserves, and exit valuations. One director of asset management in utility-scale solar noted that his team used to spend $10,000 and wait six weeks just to get a single number from an engineering study. That number was already ageing by the time it reached the committee.

The challenge is structural. Capital committees do not push back on residual value because your number is wrong. They push back because they cannot verify how you arrived at it. The three most common failure points are:

- Assumption drift — your benchmark was accurate when set, but the market moved and your inputs did not

- Single-point estimates — a precise number with no range signals that you have not stress-tested the downside

- Opaque methodology — if the inputs are not traceable to real transactions, the conclusion is not auditable

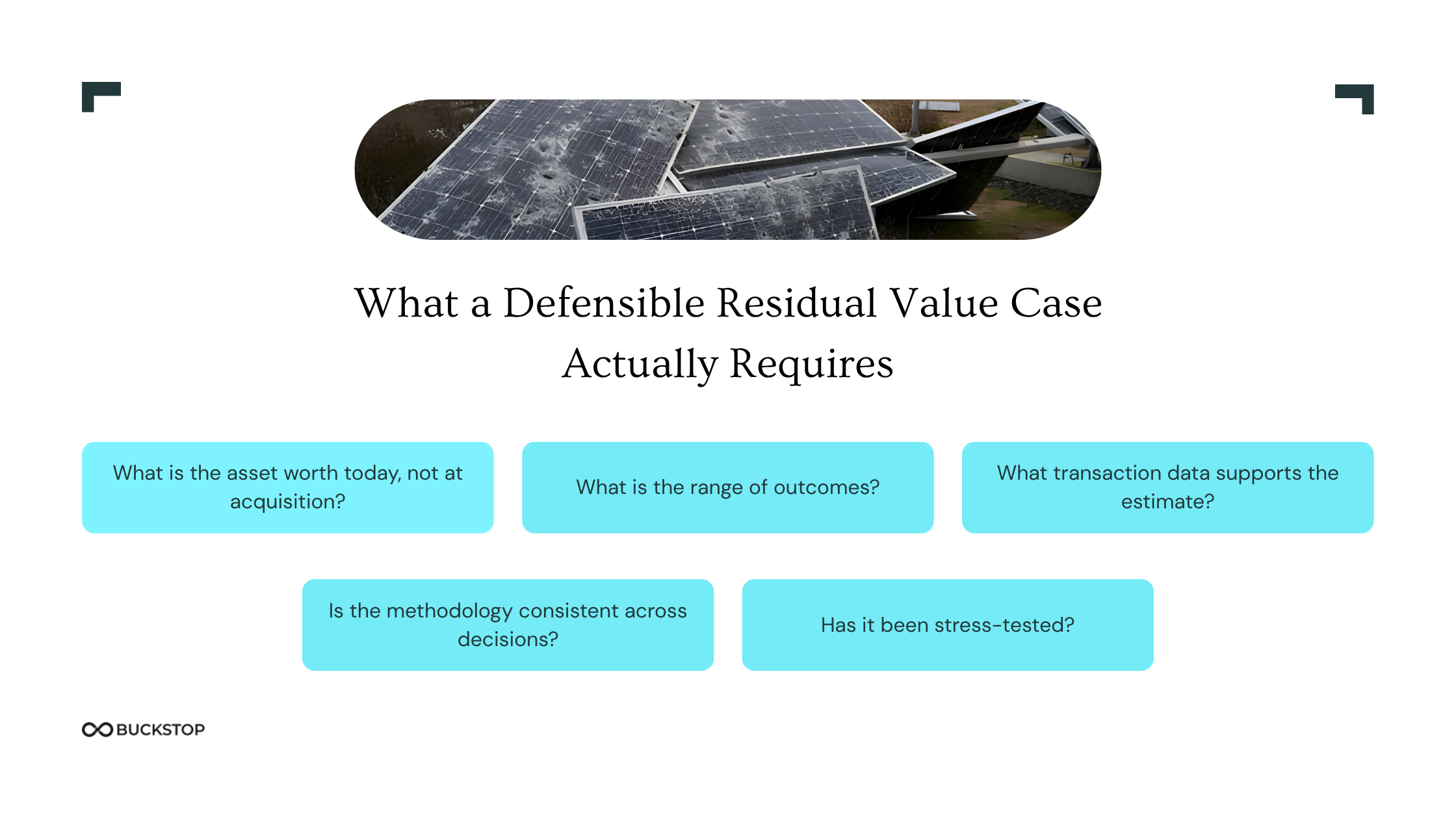

What a Defensible Residual Value Case Actually Requires

Defensibility is not about being conservative. It is about being traceable, consistent, and grounded in transaction-backed residual value data. A case that holds up answers five things clearly.

What is the asset worth today, not at acquisition? Residual value at end of life is determined by what buyers actually pay in resale and scrap markets, not by an accounting schedule. Your benchmark needs to reflect current market behaviour, not original cost minus depreciation.

What is the range of outcomes? A well-reasoned range anchored to real data is more defensible than a single precise number. It shows you understand the distribution of outcomes and have not cherry-picked the optimistic case. This matters especially in reviews of end-of-life asset valuation methodology, where auditors expect scenario coverage.

What transaction data supports the estimate? Observed pricing from active resale platforms and verified scrap transactions is the most defensible input available. Not published price lists. Not manufacturer residual tables. Actual transactions with enough volume to be statistically meaningful.

Is the methodology consistent across decisions? If your residual value benchmarking for industrial assets uses one approach in Q1 and a different one in Q3 with no documented rationale, you have an assumption drift problem that is hard to explain to anyone sitting across the table from you.

Has it been stress-tested? The most credible presentations show what happens when the market softens by 20%, when the exit is delayed, or when buyer pool depth contracts. Capital planning for residual value risk requires downside modelling, not just a base case.
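As a rough illustration, the three stresses named above can be written down as explicit, reviewable scenarios. This is a minimal sketch, not a prescribed method: the `Scenario` fields, the 1% monthly decay rate, and the 10% liquidity haircut are all hypothetical assumptions chosen for the example, and only the 20% softening figure comes from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One stress case. Every field is an illustrative assumption."""
    name: str
    market_shock: float = 0.0       # fractional price decline, e.g. 0.20 for a 20% softening
    delay_months: int = 0           # months the exit slips past plan
    liquidity_haircut: float = 0.0  # extra discount when the buyer pool thins out


def stressed_value(base_value: float, scrap_floor: float, scenario: Scenario,
                   monthly_decay: float = 0.01) -> float:
    """Apply the scenario's shocks to the base value, never dropping below the scrap floor."""
    value = base_value * (1.0 - scenario.market_shock)
    value *= (1.0 - monthly_decay) ** scenario.delay_months  # assumed value erosion per month of delay
    value *= 1.0 - scenario.liquidity_haircut
    return max(value, scrap_floor)


# Hypothetical scenario set mirroring the three stresses in the text, plus a combined case.
SCENARIOS = [
    Scenario("base"),
    Scenario("market softens 20%", market_shock=0.20),
    Scenario("exit delayed 12 months", delay_months=12),
    Scenario("buyer pool contracts", liquidity_haircut=0.10),
    Scenario("combined downside", market_shock=0.20, delay_months=12, liquidity_haircut=0.10),
]


def run_stress_tests(base_value: float, scrap_floor: float) -> dict:
    """Return the stressed value for each named scenario, rounded to cents."""
    return {s.name: round(stressed_value(base_value, scrap_floor, s), 2) for s in SCENARIOS}
```

Calling `run_stress_tests(100_000, 30_000)` returns one value per named scenario, so the committee sees the assumptions and the results side by side instead of a single adjusted number.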

The Approaches That Create Audit Risk

Engineering studies are slow, expensive, and point-in-time. By the time a study reaches your committee, market conditions may have already shifted. Spreadsheet models embed assumptions in cells that are rarely documented and impossible to benchmark externally. Static depreciation schedules treat time as the primary driver of value, ignoring real market behaviour entirely.

None of these tools were built to be interrogated. And that is exactly what audits and capital committees do.

How to Build the Case That Holds Up

Start with continuously refreshed inputs. Residual value benchmarks need to be built from observed resale pricing across active marketplaces, scrap floor values from recycling aggregators, and verified transaction data where available. Each layer serves a different purpose. Marketplace data captures live demand. Scrap floors anchor your downside. Verified transactions ground the analysis in what buyers actually paid, not what sellers listed.
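One way to picture how the three layers fit together is a simple fallback rule. The sketch below is an assumption-heavy illustration, not a description of any particular platform: the function name, the minimum sample size of five, and the 15% listing-to-sale discount are hypothetical placeholders you would replace with documented values of your own.

```python
from statistics import median
from typing import Sequence, Tuple


def layered_benchmark(verified_sales: Sequence[float],
                      listings: Sequence[float],
                      scrap_floor: float,
                      min_sales: int = 5,
                      listing_discount: float = 0.15) -> Tuple[float, str]:
    """Return (estimate, source) so every number carries its own provenance.

    Assumed priority: verified transactions first, discounted listings second,
    scrap floor as the lower bound in every case.
    """
    if len(verified_sales) >= min_sales:
        # Enough paid prices to lean on what buyers actually paid.
        estimate, source = median(verified_sales), "verified_transactions"
    elif listings:
        # Listings show live demand, but asking prices tend to sit above paid prices.
        estimate, source = median(listings) * (1.0 - listing_discount), "marketplace_listings"
    else:
        return scrap_floor, "scrap_floor"

    if estimate < scrap_floor:
        # The scrap floor anchors the downside.
        return scrap_floor, "scrap_floor"
    return estimate, source
```

Returning the source label alongside the number is the point of the design: when someone asks where the figure came from, the answer is already attached to it.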

For teams financing against solar asset residual values or managing renewable energy asset recovery, this is especially important. Secondary markets for energy equipment move on commodity prices, technology cycles, and policy changes, none of which a static model captures.

Normalize before you calculate. Raw data from multiple sources carries inconsistencies in how assets are described, categorized, and priced. Automated data cleaning and entity resolution remove that noise before it propagates into your outputs. Clean inputs are the difference between a benchmark and a guess.
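To make the normalization step concrete, here is a minimal sketch of what cleaning one record might look like. The field names, the alias table, and the choice of price per rated watt as the comparable unit are all hypothetical assumptions for illustration; real entity resolution is usually far more involved.

```python
import re

# Hypothetical alias table: different spellings of one manufacturer map to one canonical name.
MANUFACTURER_ALIASES = {
    "acme": "ACME",
    "acme solar": "ACME",
    "acme solar inc": "ACME",
}


def _clean_key(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so spellings can be matched."""
    stripped = re.sub(r"[^a-z0-9 ]", "", text.lower())
    return re.sub(r"\s+", " ", stripped).strip()


def normalize_record(raw: dict) -> dict:
    """Map one raw listing or sale onto canonical fields with a comparable unit price."""
    maker_key = _clean_key(raw["manufacturer"])
    manufacturer = MANUFACTURER_ALIASES.get(maker_key, maker_key.upper())
    # Remove spaces, hyphens, and underscores so "ax-400 b" and "AX 400B" resolve to one model.
    model = re.sub(r"[\s\-_]+", "", raw["model"]).upper()
    # Assumed comparable unit: price per rated watt.
    price_per_watt = round(raw["price_usd"] / raw["rated_watts"], 4)
    return {"manufacturer": manufacturer, "model": model, "price_per_watt": price_per_watt}
```

Two records that describe the same equipment differently, such as `"Acme Solar, Inc." / "ax-400 b"` and `" ACME " / "AX 400B"`, resolve to the same manufacturer and model, which is what lets their prices be pooled into one benchmark.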

Present a range, not a point. Document your base case, your downside, and your floor. Show the stress test assumptions. Make the methodology explicit enough that someone who did not build the model can follow it. This is what transforms a residual value analysis into something auditable.
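A base case, downside, and floor can be derived from the same set of observed prices rather than asserted separately. The sketch below assumes the median as the base case and the 10th percentile (nearest-rank) as the downside; both choices are illustrative, and the function names are hypothetical.

```python
import math
from statistics import median
from typing import Sequence


def percentile_nearest_rank(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest observation with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def residual_value_range(sale_prices: Sequence[float], scrap_floor: float,
                         downside_pct: float = 10) -> dict:
    """Summarize observed prices as base, downside, and floor, plus the sample size behind them."""
    if not sale_prices:
        raise ValueError("at least one observed price is required")
    base = max(median(sale_prices), scrap_floor)
    downside = max(percentile_nearest_rank(sale_prices, downside_pct), scrap_floor)
    return {
        "base": base,
        "downside": downside,
        "floor": scrap_floor,
        "n_observations": len(sale_prices),  # reported so reviewers can judge the sample
    }
```

Reporting the observation count next to the range lets a reviewer judge whether the sample is large enough to carry the conclusion.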

Apply the same benchmark consistently. Using the same inputs and assumptions across decisions is not just good practice. It is what makes your outputs defensible over time. Consistency is evidence that you have a system, not just a spreadsheet.

Buckstop is built for exactly this problem. Teams managing capital, risk, and value recovery use the platform to replace static analysis with transaction-backed residual value intelligence that holds up when it matters most. Book a demo to see how it works.

FAQs

What does a defensible residual value case include?

It includes transaction-backed benchmark data, a documented methodology, a range of outcomes with downside scenarios, and consistency of inputs across decisions. A single-point estimate with no supporting data is the weakest possible position in an audit or capital committee review.

How do capital committees evaluate residual value assumptions?

They look for traceability, consistency, and market grounding. They want to know where the number came from, whether the methodology has been applied consistently, and whether the inputs reflect current market conditions rather than historical assumptions.

What data do I need to defend residual value in an audit?

You need observed resale pricing from active marketplaces, scrap floor values from recycling channels, and ideally verified transaction data. The inputs need to be current, normalized, and documented. Static consultant reports or depreciation schedules alone are rarely sufficient.

What makes residual value benchmarking for industrial assets different from standard depreciation?

Standard depreciation is an accounting convention tied to original cost and time. Residual value benchmarking for industrial assets reflects actual secondary market behaviour: what buyers pay, what scrap channels recover, and how asset-specific attributes like manufacturer, configuration, and condition affect recoverability.

What is a residual value intelligence platform, and why does it matter?

A residual value intelligence platform replaces one-off studies and spreadsheet models with a continuously refreshed, transaction-backed system of record. It gives asset managers, capital teams, and underwriters a benchmark they can defend because it is grounded in real market outcomes, not assumptions.
